Apollo & Dionysus

Neuroendocrine Gating and the Architecture of Control: From Thalamocortical Filtering to GLP-1 Modulation

The body and brain are replete with gating mechanisms, ensuring that only the most salient signals reach conscious awareness, motor output, or metabolic response. Whether in sensory perception, executive control, or metabolic regulation, we see recurring patterns of filtration: inhibitory and excitatory balances that determine the priority of stimuli. The thalamocortical system, governing the flow of sensory information to the cortex, exemplifies one such mechanism, ensuring that perception is neither overwhelmed by noise nor deprived of crucial signals. In parallel, GLP-1 agonists operate as metabolic gating agents, filtering out excessive hunger cues and modulating reward pathways. Each serves as a regulator of excess, constraining chaotic input into a manageable stream of decision-relevant information. To explore these similarities rigorously, we map them onto the architecture of the neural network model, tracing the interplay of world, perception, agency, equilibrium, and execution.

At the level of cosmos and planet, the most fundamental layer of our network, we recognize the necessity of constraints in maintaining systemic balance. Just as a planet’s gravitational field dictates the trajectories of celestial bodies within its orbit, regulatory gating structures provide the necessary parameters for physiological homeostasis. The thalamus does not indiscriminately relay sensory inputs but selectively amplifies or dampens them based on attentional demands. Likewise, GLP-1 does not indiscriminately suppress appetite but fine-tunes energy balance through interactions with the hypothalamus and brainstem. These systems operate as universal filters, ensuring that an organism does not overconsume sensory data or metabolic resources beyond its capacity for processing. Such filtration is not an incidental feature of intelligence but its precondition.

Fig. 19 An essay exploring the relationship between servers, browsers, search mechanics, agentic models, and the growing demand for distributed compute. You’re setting up a discussion that touches on the architecture of information retrieval, the role of AI as an autonomous agent in querying and decision-making, and the economic implications of compute optimization. The key tension lies in the shift from user-initiated queries to AI-driven agentic interactions, where the browser becomes less of a search engine interface and more of an intermediary between users and computational agents. The equation Intelligence = Log(Compute) suggests a logarithmic efficiency in intelligence gains relative to compute expansion, which could be an interesting angle to explore in light of the exponential growth in AI capabilities.

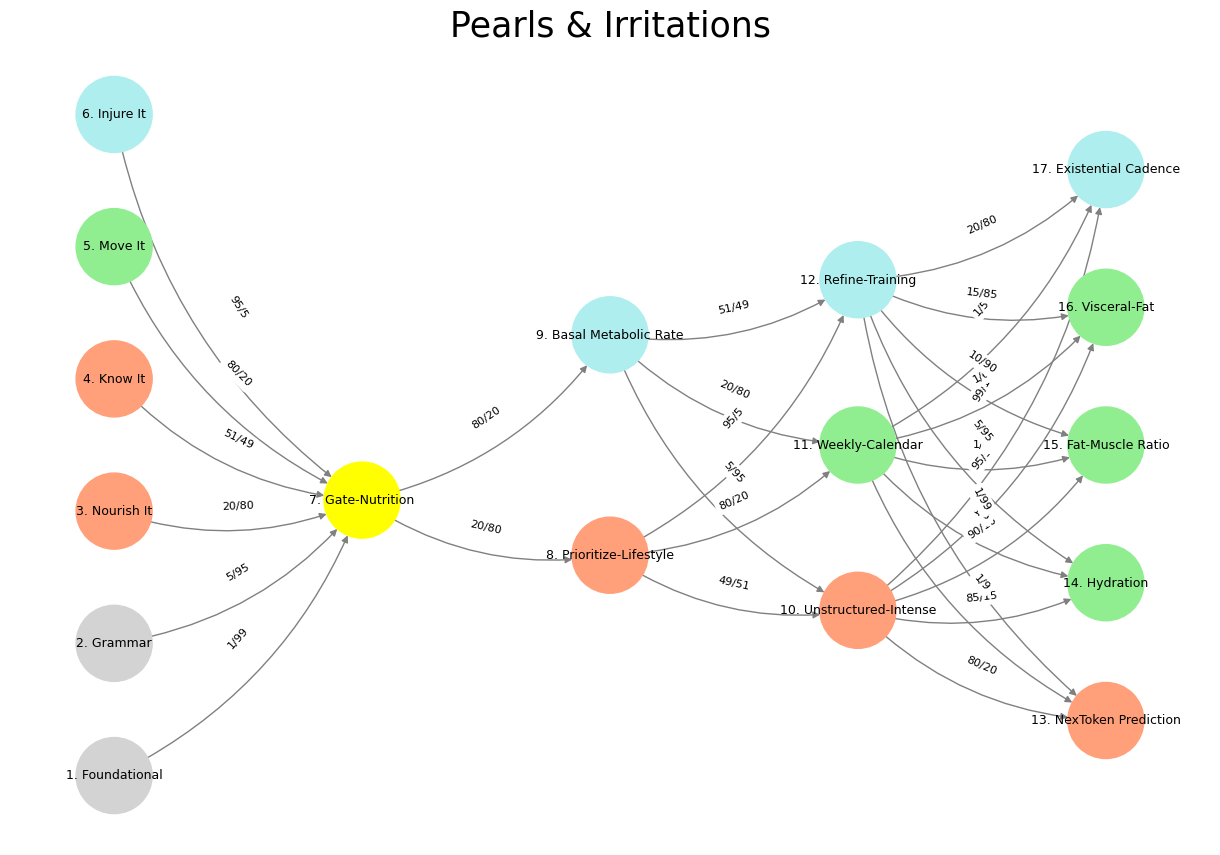

Perception and compression, represented by the yellow node, consolidate chaotic information into digestible streams. Thalamocortical gating is, in essence, a compression algorithm for sensory input, reducing redundant or unimportant signals so that cognition can focus on what matters. GLP-1 agonists similarly compress metabolic signaling, dampening the noise of hedonic hunger and stabilizing homeostasis. Without such constraints, perception and energy intake would spiral into dysfunction—hyperstimulation of sensory pathways would lead to hallucinations and sensory flooding, while unregulated caloric consumption would drive metabolic disease. The yellow node thus stands as the first point of convergence for both sensory and metabolic gating, marking the threshold where signal modulation begins.

The transition to agentic capability—the realm of ascending and descending networks (N1-N5)—introduces bidirectional feedback loops between control centers and output systems. The thalamus is not merely a passive relay but a structure that interacts dynamically with cortical regions, adjusting its gating based on higher-order cognition. Similarly, GLP-1 modulation is not a one-way suppression of appetite but a bidirectional influence on reward pathways, shifting dopaminergic activity in the ventral tegmental area (VTA) and nucleus accumbens. These mechanisms embody a negotiation between inhibition and excitation, echoing the structure of our ascending (activation) and descending (inhibitory) tracts in neural processing. Just as the descending corticospinal pathways refine movement by suppressing maladaptive reflexes, GLP-1 agonists refine metabolic behavior by suppressing compulsive intake while preserving necessary consumption.

At the level of generative equilibria, where the sympathetic and parasympathetic systems balance the organism’s broader state, we find the strongest analogy between GLP-1 modulation and thalamocortical filtering. The thalamic reticular nucleus (TRN) acts as an inhibitory sheath around the thalamus, preventing excessive sensory excitation and regulating attentional transitions. This directly parallels GLP-1’s ability to curb hedonic overeating by dampening reward system overactivity. In both cases, a higher-order regulatory function limits excess stimulation. The deeper question emerges: are these systems merely inhibitory, or do they serve as strategic allocators of attention and energy? The answer lies in their ability to fine-tune excitability rather than merely suppress it. In other words, neither thalamocortical gating nor GLP-1 agonism is about shutting down activity—it is about ensuring that the right activity is amplified at the right time.
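The distinction between suppressing a signal and tuning its excitability can be made concrete with a toy gain function. The sketch below is an illustration only, not a physiological model: the function name `gate_signals`, the sigmoid form, and the `salience` and `inhibition` parameters are all assumptions introduced here. Each input is scaled by a gain between 0 and 1, so that raising the inhibitory tone lowers every output without silencing any of them.

```python
import numpy as np

def gate_signals(inputs, salience, inhibition=1.0):
    """Toy gate (hypothetical): scale each input by a sigmoid gain.

    The gain depends on how far each signal's salience sits above or
    below the inhibitory tone. It stays strictly between 0 and 1, so
    signals are attenuated or passed, never fully switched off.
    """
    inputs = np.asarray(inputs, dtype=float)
    salience = np.asarray(salience, dtype=float)
    gain = 1.0 / (1.0 + np.exp(-(salience - inhibition)))
    return gain * inputs

# Three equally strong inputs with increasing salience.
inputs = [1.0, 1.0, 1.0]
salience = [0.0, 1.0, 3.0]

gated = gate_signals(inputs, salience, inhibition=1.0)
stronger = gate_signals(inputs, salience, inhibition=2.0)

print("baseline inhibition:", gated)
print("raised inhibition:  ", stronger)
```

When salience equals the inhibitory tone the gain is exactly 0.5, which is the sense in which the gate allocates rather than blocks: the same input passes more or less strongly depending on context.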

A table crystallizes this analogy, clarifying the parallel functions across both domains:

| Feature | Thalamocortical Gating | GLP-1 Agonists |
|---|---|---|
| Primary Function | Filters sensory information before cortical processing | Filters metabolic signals before reward/motivation responses |
| Modulated Signals | Sensory input, attention, and arousal | Hunger, reward sensitivity, systemic metabolism |
| Key Structures | Thalamus, Reticular Nucleus, Cortex | Hypothalamus, Brainstem, Cortex |
| Neurotransmitters | GABA, Glutamate, Acetylcholine | GLP-1, Dopamine, Serotonin |
| Effects of Dysfunction | Schizophrenia, ADHD, Sensory Overload | Obesity, Metabolic Syndrome, Cognitive Impairment |

This balance between suppression and enhancement reveals an implicit optimization principle. The body does not seek to maximize signal but to refine it. The same logic applies to artificial intelligence. If we were to extend this analogy to transformers and attention mechanisms, we might recognize that the self-attention layers in GPT models function as computational analogs of these neuroendocrine filters. A transformer does not process every word equally; it assigns weighted importance, allowing the most relevant tokens to guide subsequent inference. In the same way, the thalamus and GLP-1 systems selectively modulate what matters, ensuring that perception, behavior, and metabolism are directed toward meaningful rather than distracting stimuli.
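The weighting described above can be sketched as single-head scaled dot-product self-attention in NumPy. This is a minimal illustration under stated assumptions, not the implementation used by any particular GPT model: the helper names, the random projection matrices, and the toy sizes (4 tokens, 8 dimensions) are all chosen here for the example.

```python
import numpy as np

def softmax(x, axis=-1):
    """Numerically stable softmax along the given axis."""
    x = x - np.max(x, axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def self_attention(X, W_q, W_k, W_v):
    """Single-head scaled dot-product self-attention (illustrative).

    X has shape (tokens, features). Returns the attended output and
    the attention weights, where row i holds the importance token i
    assigns to every token in the sequence.
    """
    Q, K, V = X @ W_q, X @ W_k, X @ W_v
    scores = Q @ K.T / np.sqrt(K.shape[-1])
    weights = softmax(scores, axis=-1)
    return weights @ V, weights

# Toy data: 4 tokens with 8 features each (arbitrary sizes).
rng = np.random.default_rng(0)
X = rng.normal(size=(4, 8))
W_q, W_k, W_v = (rng.normal(size=(8, 8)) for _ in range(3))

output, weights = self_attention(X, W_q, W_k, W_v)
print(weights.round(3))
```

Each row of `weights` is non-negative and sums to 1, so attention redistributes a fixed budget of importance across tokens rather than amplifying everything at once, which is the property the gating analogy relies on.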

Execution in time—the final layer of our neural model—concerns the actualization of these filtered processes. In a functioning organism, thalamocortical gating results in coherent perception, motor responses, and cognitive stability, while GLP-1 modulation results in regulated metabolic behavior. The execution of these refined signals defines behavioral and physiological equilibrium. Just as the execution layer of a neural network is where the decision is instantiated, so too does the execution layer of biological systems translate regulatory control into real-world outcomes. Without these gating mechanisms, both sensory and metabolic domains would collapse into disorder.

Ultimately, the recurring theme is optimization through selective inhibition. Whether in the realm of sensation, metabolism, or artificial intelligence, intelligence emerges not from unfiltered access to raw data, but from the ability to gate, prioritize, and refine. The interplay of these systems suggests a deep structural resonance across biological and artificial intelligence. To gate is not to diminish—it is to create the conditions for intelligence itself.


```python
import numpy as np
import matplotlib.pyplot as plt
import networkx as nx

# Define the neural network layers
def define_layers():
    return {
        'Suis': ['Foundational', 'Grammar', 'Nourish It', 'Know It', "Move It", 'Injure It'],  # Static
        'Voir': ['Gate-Nutrition'],
        'Choisis': ['Prioritize-Lifestyle', 'Basal Metabolic Rate'],
        'Deviens': ['Unstructured-Intense', 'Weekly-Calendar', 'Refine-Training'],
        "M'èléve": ['NexToken Prediction', 'Hydration', 'Fat-Muscle Ratio', 'Visceral-Fat', 'Existential Cadence']
    }

# Assign colors to nodes
def assign_colors():
    color_map = {
        'yellow': ['Gate-Nutrition'],
        'paleturquoise': ['Injure It', 'Basal Metabolic Rate', 'Refine-Training', 'Existential Cadence'],
        'lightgreen': ["Move It", 'Weekly-Calendar', 'Hydration', 'Visceral-Fat', 'Fat-Muscle Ratio'],
        'lightsalmon': ['Nourish It', 'Know It', 'Prioritize-Lifestyle', 'Unstructured-Intense', 'NexToken Prediction'],
    }
    return {node: color for color, nodes in color_map.items() for node in nodes}

# Define edge weights (hardcoded for editing)
def define_edges():
    return {
        ('Foundational', 'Gate-Nutrition'): '1/99',
        ('Grammar', 'Gate-Nutrition'): '5/95',
        ('Nourish It', 'Gate-Nutrition'): '20/80',
        ('Know It', 'Gate-Nutrition'): '51/49',
        ("Move It", 'Gate-Nutrition'): '80/20',
        ('Injure It', 'Gate-Nutrition'): '95/5',
        ('Gate-Nutrition', 'Prioritize-Lifestyle'): '20/80',
        ('Gate-Nutrition', 'Basal Metabolic Rate'): '80/20',
        ('Prioritize-Lifestyle', 'Unstructured-Intense'): '49/51',
        ('Prioritize-Lifestyle', 'Weekly-Calendar'): '80/20',
        ('Prioritize-Lifestyle', 'Refine-Training'): '95/5',
        ('Basal Metabolic Rate', 'Unstructured-Intense'): '5/95',
        ('Basal Metabolic Rate', 'Weekly-Calendar'): '20/80',
        ('Basal Metabolic Rate', 'Refine-Training'): '51/49',
        ('Unstructured-Intense', 'NexToken Prediction'): '80/20',
        ('Unstructured-Intense', 'Hydration'): '85/15',
        ('Unstructured-Intense', 'Fat-Muscle Ratio'): '90/10',
        ('Unstructured-Intense', 'Visceral-Fat'): '95/5',
        ('Unstructured-Intense', 'Existential Cadence'): '99/1',
        ('Weekly-Calendar', 'NexToken Prediction'): '1/9',
        ('Weekly-Calendar', 'Hydration'): '1/8',
        ('Weekly-Calendar', 'Fat-Muscle Ratio'): '1/7',
        ('Weekly-Calendar', 'Visceral-Fat'): '1/6',
        ('Weekly-Calendar', 'Existential Cadence'): '1/5',
        ('Refine-Training', 'NexToken Prediction'): '1/99',
        ('Refine-Training', 'Hydration'): '5/95',
        ('Refine-Training', 'Fat-Muscle Ratio'): '10/90',
        ('Refine-Training', 'Visceral-Fat'): '15/85',
        ('Refine-Training', 'Existential Cadence'): '20/80'
    }

# Calculate positions for nodes
def calculate_positions(layer, x_offset):
    y_positions = np.linspace(-len(layer) / 2, len(layer) / 2, len(layer))
    return [(x_offset, y) for y in y_positions]

# Create and visualize the neural network graph
def visualize_nn():
    layers = define_layers()
    colors = assign_colors()
    edges = define_edges()
    G = nx.DiGraph()
    pos = {}
    node_colors = []

    # Create mapping from original node names to numbered labels
    mapping = {}
    counter = 1
    for layer in layers.values():
        for node in layer:
            mapping[node] = f"{counter}. {node}"
            counter += 1

    # Add nodes with new numbered labels and assign positions
    for i, (layer_name, nodes) in enumerate(layers.items()):
        positions = calculate_positions(nodes, x_offset=i * 2)
        for node, position in zip(nodes, positions):
            new_node = mapping[node]
            G.add_node(new_node, layer=layer_name)
            pos[new_node] = position
            node_colors.append(colors.get(node, 'lightgray'))

    # Add edges with updated node labels
    for (source, target), weight in edges.items():
        if source in mapping and target in mapping:
            new_source = mapping[source]
            new_target = mapping[target]
            G.add_edge(new_source, new_target, weight=weight)

    # Draw the graph
    plt.figure(figsize=(12, 8))
    edges_labels = {(u, v): d["weight"] for u, v, d in G.edges(data=True)}
    nx.draw(
        G, pos, with_labels=True, node_color=node_colors, edge_color='gray',
        node_size=3000, font_size=9, connectionstyle="arc3,rad=0.2"
    )
    nx.draw_networkx_edge_labels(G, pos, edge_labels=edges_labels, font_size=8)
    plt.title("Pearls & Irritations", fontsize=25)
    plt.show()

# Run the visualization
visualize_nn()
```

Fig. 20 Change of Guards. In Grand Illusion, Renoir was dealing the final blow to the Ancien Régime. And in Rules of the Game, he was hinting at another change of guards, from agentic mankind to one in a mutualistic bind with machines (unsupervised pianos & supervised airplanes). How prescient! Fox and our papers are the only faintly conservative voices against the monolithic liberal media. I believe maintaining this is vital to the future of the English-speaking world. Source: Pearls & Irritations